This Python Vosk tutorial describes how to convert the speech in an MP3 audio file into text saved as a JSON file. The process has three steps, all of which are detailed in this post.

This is part of a series of posts, so if you’re starting here you might want to read the first two posts:

- Which Python Library To Use For Speech To Text Conversion

- How To Setup A Python Speech To Text Environment Using Vosk

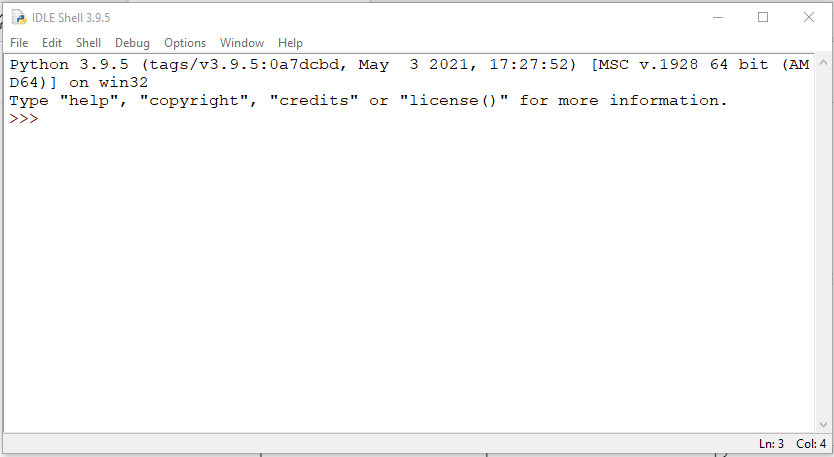

In the post that describes how to set up the environment, we created a Python virtual environment and a batch file to activate it. On my Windows 10 system, starting a command line window gives me a command prompt with the current directory set to c:\users\xxx, where xxx is my Windows user ID. You can open the Windows command line by searching for “command prompt” and running the application. The batch file we created was called NLP.bat, so entering NLP.bat at the command prompt should activate your Python virtual environment. We also created a batch file for Idle, so go ahead and start your Idle Python editor.

Step 1: Convert MP3 to WAV File

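Once you are in Idle, open a new editor window (File > New File), paste in the following code, and run it with Run > Run Module (F5). Pasting several statements at once into the Idle shell itself will not work.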

```python
from os import path

from pydub import AudioSegment

# files
src = "C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-Final.mp3"
dst = "C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-cut60-120.wav"

# start time in seconds
startTime = 60.0

# end time in seconds
endTime = 120.0

# create audiosegment
sound = AudioSegment.from_mp3(src)

# cut it to length
cut = sound[startTime * 1000:endTime * 1000]

# export sound cut as wav file
cut.export(dst, format="wav")
```

Explanation of Step 1 Python Code

Let’s walk through the code and see what it does.

```python
from os import path
from pydub import AudioSegment
```

First a few imports. The pydub module provides an easy-to-use API for processing audio files in many formats. If you only work with WAV files, pydub does all the work on its own, but to work with MP3 or other audio formats pydub uses FFmpeg under the covers. The second post in this series described how to install FFmpeg.

```python
src = "C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-Final.mp3"
dst = "C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-cut60-120.wav"
```

Next we define a source and a destination file. In my case I’ve downloaded an episode of the No Agenda podcast, which is an MP3 file three hours long. You can substitute any MP3 file you want or download an episode of the podcast yourself. The destination file does not need to exist, but if it does it will be overwritten with no warning.
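The script imports path from os but never uses it. If you would rather not replace an existing file by accident, one option is a small guard like the hypothetical check_destination helper below, called before cut.export(dst, format="wav"). It is a sketch of one approach, not part of pydub.

```python
from os import path


def check_destination(dst, overwrite=False):
    """Raise an error instead of silently replacing an existing file (hypothetical helper)."""
    if path.exists(dst) and not overwrite:
        raise FileExistsError(f"{dst} already exists; pass overwrite=True to replace it")
    return dst


# Usage in the script above:
# cut.export(check_destination(dst), format="wav")
```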

```python
# start time in seconds
startTime = 60.0

# end time in seconds
endTime = 120.0
```

The startTime and endTime variables let us extract a slice of the sound file, which in my case is three hours long. In the example above we are pulling out the second minute of the podcast. If you want to process your entire file, skip the slice and export the whole “sound” object instead of “cut”.

```python
# create audiosegment
sound = AudioSegment.from_mp3(src)

# cut it to length
cut = sound[startTime * 1000:endTime * 1000]

# export sound cut as wav file
cut.export(dst, format="wav")
```

Finally we get to the actual work of the script. The first line creates an AudioSegment object from the file named by the src variable, so the “sound” variable contains the entire MP3 file.

The second line creates a variable “cut” that is a slice of “sound”. The startTime and endTime values are multiplied by 1000 because pydub indexes and slices an AudioSegment in milliseconds, so 60 to 120 seconds becomes sound[60000:120000].

For the last step we use the “export” method to save the “cut” portion of the file as a WAV file. This is one of the nice features of pydub: it reads in the MP3 file, and a single method call saves it as a WAV file.
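pydub slices in milliseconds, while Python’s built-in wave module positions audio in frames (one sample per channel), so the conversion from seconds is “multiply by the frame rate” rather than “multiply by 1000”. If your source is already a WAV file, the same cut can be made without pydub or FFmpeg. The hypothetical cut_wav helper below is a sketch that assumes an uncompressed PCM WAV input and start_s < end_s.

```python
import wave


def cut_wav(src, dst, start_s, end_s):
    """Copy the audio between start_s and end_s (in seconds) from one WAV file to another."""
    with wave.open(src, "rb") as wav_in:
        rate = wav_in.getframerate()
        # wave positions are counted in frames, so seconds are multiplied by the frame rate
        start_frame = int(start_s * rate)
        end_frame = min(int(end_s * rate), wav_in.getnframes())
        wav_in.setpos(start_frame)
        frames = wav_in.readframes(end_frame - start_frame)
        params = wav_in.getparams()

    with wave.open(dst, "wb") as wav_out:
        # same channels, sample width and frame rate; the frame count is fixed up on close
        wav_out.setparams(params)
        wav_out.writeframes(frames)
    return end_frame - start_frame
```

For example, cut_wav(wavFile, cutFile, 60.0, 120.0) would copy the second minute of an existing WAV file (both file names hypothetical). The MP3 route still needs pydub and FFmpeg, since the wave module cannot decode MP3.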

Step 2: Convert Stereo WAV File To Mono

The Vosk speech to text conversion library requires a mono WAV file as input. In step 1 we used the pydub library to cut a 60-second slice out of our MP3 file and saved it as a WAV file. That WAV file is stereo, so now we need to convert it to mono. To do this we use the audioop module from the Python standard library. Note that audioop was deprecated in Python 3.11 and removed in Python 3.13, so this script needs Python 3.12 or earlier. Here’s the complete Python script; you can copy and paste it into a new Idle window to run it.

```python
import wave
import audioop  # deprecated in Python 3.11, removed in Python 3.13

inFileName = 'C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-cut60-120.wav'
outFileName = 'C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\NOAGENDA\\NA-1348-2021-05-20-cut60-120-Mono.wav'

# start with no open files so the finally block is safe if wave.open() fails
inFile = None
outFile = None

# read input file and write mono output file
try:
    # open the input and output files
    inFile = wave.open(inFileName, 'rb')
    outFile = wave.open(outFileName, 'wb')

    # force mono
    outFile.setnchannels(1)

    # set output file like the input file
    outFile.setsampwidth(inFile.getsampwidth())
    outFile.setframerate(inFile.getframerate())

    # read
    soundBytes = inFile.readframes(inFile.getnframes())
    print("frames read: {} length: {}".format(inFile.getnframes(), len(soundBytes)))

    # convert to mono by averaging left and right (factors of 1, 1 would sum them
    # and can clip loud passages), then write the file
    monoSoundBytes = audioop.tomono(soundBytes, inFile.getsampwidth(), 0.5, 0.5)
    outFile.writeframes(monoSoundBytes)
except Exception as e:
    print(e)
finally:
    if inFile is not None:
        inFile.close()
    if outFile is not None:
        outFile.close()
```

Explanation of Step 2 Python Code

The script above uses two Python modules, wave and audioop. Both are part of the standard library that comes with Python, so there is no “pip install” step.

Step 1 – The first thing the script does is import the wave and audioop modules.

Step 2 – Define the input WAV file, which should be the file we output in the previous script (the WAV file produced from the original MP3 file). We also define an output WAV file, which will be the mono file.

Step 3 – The rest of the code is inside a try/except/finally block. We use the wave module to open the “inFile” WAV file for reading and the “outFile” WAV file for writing.

Step 4 – Now define the characteristics of the output WAV file. First set the number of channels to 1 with the setnchannels method, which defines the output file as mono. Then set the sample width and frame rate to match the input file.

Step 5 – Read the frames from the input file into a variable called soundBytes using the readframes method.

Step 6 – Now use the audioop.tomono function to convert the stereo sound bytes to mono.

Here’s the explanation of tomono from the audioop documentation:

audioop.tomono(fragment, width, lfactor, rfactor)

Convert a stereo fragment to a mono fragment. The left channel is multiplied by lfactor and the right channel by rfactor before adding the two channels to give a mono signal.

Step 7 – Using the writeframes method, the mono WAV file is written to disk.

Step 8 – The finally block will now close both the input and output files.
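Because audioop was removed in Python 3.13, import audioop fails on newer Pythons. The tomono formula quoted above is simple enough to reproduce with the standard array module. The hypothetical tomono_16bit function below is a sketch that assumes 16-bit little-endian PCM samples (sample width 2 in wave terms, which is what a typical WAV file holds); it is not a drop-in replacement for every sample width audioop supports.

```python
import array
import math
import sys


def tomono_16bit(fragment, lfactor, rfactor):
    """Mix interleaved 16-bit stereo PCM bytes down to mono PCM bytes."""
    samples = array.array("h", fragment)
    if sys.byteorder == "big":
        samples.byteswap()  # WAV sample data is little-endian
    mono = array.array("h")
    # samples alternate left, right, left, right, ...
    for left, right in zip(samples[0::2], samples[1::2]):
        value = math.floor(left * lfactor + right * rfactor)
        mono.append(max(-32768, min(32767, value)))  # clip to the 16-bit range
    if sys.byteorder == "big":
        mono.byteswap()
    return mono.tobytes()
```

In the script you would call tomono_16bit(soundBytes, 0.5, 0.5) in place of audioop.tomono(...), after checking that inFile.getsampwidth() == 2. Factors of 0.5 average the two channels; factors of 1 add them, which can clip loud passages.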

Success – starting from our stereo MP3 podcast download, we now have a mono WAV file that Vosk can process.
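Before moving on to step 3, it can be worth confirming that the output file really is what Vosk expects. The hypothetical vosk_wav_problems helper below uses only the wave module. The mono requirement comes from this post; the 16-bit and uncompressed-PCM checks are an assumption modeled on the input check in Vosk’s own Python example scripts.

```python
import wave


def vosk_wav_problems(file_name):
    """Return reasons a WAV file may be rejected; an empty list means it looks usable."""
    problems = []
    with wave.open(file_name, "rb") as wf:
        if wf.getnchannels() != 1:
            problems.append(f"expected 1 channel, found {wf.getnchannels()}")
        # assumption: 16-bit samples, as checked in Vosk's example scripts
        if wf.getsampwidth() != 2:
            problems.append(f"expected 16-bit samples, found {8 * wf.getsampwidth()}-bit")
        if wf.getcomptype() != "NONE":
            problems.append(f"expected uncompressed PCM, found {wf.getcomptype()}")
    return problems


# Usage: print(vosk_wav_problems(outFileName)) should print [] for the mono file
```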

Step 3: Convert The Mono WAV File To Text

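Here is the code. Copy and paste it into a new Idle window and run it to produce JSON text files from the audio WAV file. The file names below point to a different recording than the one used in steps 1 and 2, so change inFileName to the mono WAV file you created in step 2 (and the output names to match).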

```python
from vosk import Model, KaldiRecognizer
import wave
import json

'''
this script reads a mono wav file (inFileName) and writes out a json file (outfileResults)
with the speech to text conversion results. It then writes out another json file
(outfileText) that only has the "text" values.
'''

inFileName = 'C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\COURSE\\M1S3-Mono.wav'
outfileResults = 'C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\COURSE\\M1S3-Results.json'
outfileText = 'C:\\Users\\xxx\\pyenv\\NLP\\inputfiles\\COURSE\\M1S3-Text.json'

wf = wave.open(inFileName, "rb")

# initialize a str to hold results
results = ""
textResults = []

# build the model and recognizer objects.
model = Model(r"C:\Users\xxx\pyenv\NLP\model")
recognizer = KaldiRecognizer(model, wf.getframerate())
recognizer.SetWords(True)

while True:
    data = wf.readframes(4000)
    if len(data) == 0:
        break
    if recognizer.AcceptWaveform(data):
        recognizerResult = recognizer.Result()
        results = results + recognizerResult
        # convert the recognizerResult string into a dictionary
        resultDict = json.loads(recognizerResult)
        # save the 'text' value from the dictionary into a list
        textResults.append(resultDict.get("text", ""))
    ## else:
    ##     print(recognizer.PartialResult())

wf.close()

# process "final" result: call FinalResult() once and reuse the string,
# because calling it a second time does not return the same text again
finalResult = recognizer.FinalResult()
results = results + finalResult
resultDict = json.loads(finalResult)
textResults.append(resultDict.get("text", ""))

# write results to a file
with open(outfileResults, 'w') as output:
    print(results, file=output)

# write text portion of results to a file
with open(outfileText, 'w') as output:
    print(json.dumps(textResults, indent=4), file=output)
```
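The loop in the script reads the WAV file 4000 frames at a time. A frame holds one sample per channel, so for a mono 16-bit file each chunk is 8000 bytes; at a 16,000 Hz frame rate that is a quarter of a second of audio, and at 44,100 Hz (common for podcast audio) it is about 0.09 seconds. The hypothetical count_chunks helper below is a sketch that reads a file the same way using only the wave module (no Vosk), so you can see how many chunks a file produces and how long each one is.

```python
import wave


def count_chunks(file_name, frames_per_chunk=4000):
    """Read a WAV file in fixed-size chunks, as the Vosk loop does, without recognizing anything."""
    with wave.open(file_name, "rb") as wf:
        seconds_per_chunk = frames_per_chunk / wf.getframerate()
        bytes_per_chunk = frames_per_chunk * wf.getnchannels() * wf.getsampwidth()
        chunks = 0
        while True:
            data = wf.readframes(frames_per_chunk)
            if len(data) == 0:  # same end-of-file test as the Vosk loop
                break
            chunks += 1  # the last chunk may be shorter than frames_per_chunk
    return chunks, seconds_per_chunk, bytes_per_chunk


# Usage: print(count_chunks(inFileName))
```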

Explanation of Step 3 Python Code

Finally, we can process our WAV file and produce a JSON file with the text generated by Vosk from the audio file.

Step 1 – do a bunch of imports. Note the imports from the Vosk module.

Step 2 – define variables with the input and output file names.

Step 3 – open the input file in read mode using the wave.open method.

Step 4 – create a variable “results” and set it to an empty string. This variable will hold the json data returned by the recognizer as it processes the WAV file. Create an empty list “textResults” which will hold a list of the “text” values from the larger results data.

Step 5 – create the model that will control the speech analysis. The parameter passed to Model is the folder that contains the model you downloaded as part of the setup (see the previous post). You can specify the full path as I’ve done here, or a relative path; note that a relative path is resolved against the current working directory, which is not necessarily the folder the script is in.

Step 6 – create the recognizer object. This is what translates the audio to text using the supplied model.

Step 7 – now we go into an infinite loop using the “while True” construct (great programming, huh). The loop works its way through the entire input file until the readframes method returns a zero-length chunk of data. Each chunk is processed with the recognizer.AcceptWaveform(data) method, which returns True when a complete stretch of speech has been processed. I think this is defined by some amount of silence between words, but the documentation is sketchy, so I might not be interpreting it correctly. Regardless, when this method returns True you can retrieve a chunk of JSON that describes each spoken word, including its start and end times in the audio file, as well as the complete string of spoken words. This is done with the recognizer.Result() method; the returned JSON string is stored in the “recognizerResult” variable and then concatenated to the “results” variable.

Next we convert the contents of the “recognizerResult” variable into a Python dictionary using json.loads(). This makes it easy to extract the “text” value, which is appended to a Python list.

I’ve commented out the call to recognizer.PartialResult(). If you uncomment those lines, the partial result JSON for each chunk of data is printed to the Idle shell, showing each word as it is recognized. Needless to say this is a lot of output and not very useful except for debugging, which is why I have it commented out. The loop terminates when we get a zero-length chunk of data. Once we are outside the loop there is one final call to recognizer.FinalResult(), which returns the last piece of JSON.

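Step 8 – Once processing is complete, we save the string in the “results” variable to the results file. Because that string is several JSON objects joined end to end, the file is not a single valid JSON document.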

Step 9 – The last step is to save the list of “text” values as a JSON file. Note the use of the json.dumps() function, which converts the Python list of “text” values into a pretty-printed JSON string.
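The script glues each Result() string onto the end of “results”, so the results file holds one JSON object per recognized stretch of speech, back to back, and calling json.load() on the whole file fails with an “Extra data” error. One way to read it back is json.JSONDecoder.raw_decode(), which parses a single JSON document from the start of a string and reports the index where it ended. The hypothetical split_json_objects helper below is a sketch using only the standard library.

```python
import json


def split_json_objects(text):
    """Parse a string of back-to-back JSON objects into a list of Python objects."""
    decoder = json.JSONDecoder()
    objects = []
    remaining = text.lstrip()
    while remaining:
        # raw_decode parses one document and returns it plus the index where it ended
        obj, end = decoder.raw_decode(remaining)
        objects.append(obj)
        # raw_decode does not accept leading whitespace, so strip it before the next object
        remaining = remaining[end:].lstrip()
    return objects


# Usage with the results file written by the script:
# with open(outfileResults) as f:
#     for result in split_json_objects(f.read()):
#         print(result.get("text", ""))
```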

Finally:

Well, we’ve completed our first conversion of spoken English in an MP3 file into JSON containing each individual word recognized by Vosk, as well as each “sentence”, or at least fragment of speech. In the next post we will examine the output JSON to see exactly what we get and look at the accuracy of the transcription.
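If you want a quick look at the per-word data before then, each object returned by Result() can be turned into (word, start, end) tuples. The sample string below is made up for illustration and is not real output; its field names (“result”, “word”, “start”, “end”, “conf”, “text”) assume the shape Vosk produces when SetWords(True) is enabled. words_with_times is a hypothetical helper.

```python
import json

# Made-up example in the shape Vosk returns with SetWords(True); not real output.
sample_result = """{
  "result" : [
    {"conf" : 1.0, "end" : 1.02, "start" : 0.57, "word" : "hello"},
    {"conf" : 0.98, "end" : 1.5, "start" : 1.05, "word" : "world"}
  ],
  "text" : "hello world"
}"""


def words_with_times(result_json):
    """Return (word, start_seconds, end_seconds) for each word in one recognizer result."""
    result = json.loads(result_json)
    # a result with no recognized speech may have no "result" list at all
    return [(w["word"], w["start"], w["end"]) for w in result.get("result", [])]


for word, start, end in words_with_times(sample_result):
    print(f"{start:6.2f} {end:6.2f}  {word}")
```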

Previous Post – How To Set Up A Python Environment To Translate Speech To Text Using Vosk

Next Post – Results of Vosk Python Speech To Text Conversion

The Complete App

If you want to go straight to the full solution then check out this complete python application.


Thanks John for this introduction into speech recognition with python and vosk!

I fiddled with your little tkinter application and was able to output text files, which I then ran through another model that corrects punctuation and capitalization where necessary. I’d like to share this file with a backlink to your work. Can I find it on Codeberg or GitHub?

Thanks

Daniel – Thanks for sharing. I’m very interested in how you added punctuation. My next goal is to run the text through NLP software to find concepts which I think will work better with punctuation. Anyway, if you would like to post your code on your blog and link to mine go ahead and I will add a link to your site. I am also going to add a github repository soon.

Thanks John!

I’ll have a blog post about the script and link to this site. If you don’t mind linking to a German site, I’d be pleased.

Regardless of this I’ve created a Codeberg repository (https://codeberg.org/codade/SpeechToTextGUI/) with my changes to the code you provided. Feel free to check it and especially test it. I’m using the work of Benoit Favre for punctuation, which is also available on the Vosk page. So far I have only tested the German file from the Vosk page, and it works. I tried to link all relevant sources in the repository. If you see something is missing, please let me know, and also tell me when your repository is up so I can link to it.